Introducing

Hi

I'm Abraham Aroloye

I started as a self-taught web developer and over the years shifted my focus entirely to data engineering. What pulled me in was the challenge of building things that are reliable, scalable, and actually useful at a production level.

Pipeline Orchestration

Designing and managing complex DAGs in Airflow, covering task dependencies, ETL/ELT workflows, and scheduling.

Python & SQL

Building ingestion clients and data transformation scripts with clean, maintainable code, and tuning queries across multiple platforms.

Data Warehouse & Database

Using PostgreSQL, including JSONB for semi-structured data, Snowflake for analytical workloads, and Databricks for heavy distributed processing.

Infrastructure & DevOps

Containerised deployments, webhook integrations, and production system management in Docker.

Why Me?

I build data systems, not just scripts

My background spans ETL/ELT pipelines, medallion architectures, REST API integrations, data quality enforcement, and containerised deployments. I think in systems. I care about idempotency, change detection, and making sure data is trustworthy before it reaches anyone downstream.

Right now I am targeting Analytics Engineer and Data Engineer roles where I can work with dbt, modern cloud warehouses, and teams that care about doing data modelling properly.

End-to-end pipeline delivery: From API ingestion to transformed, tested, documented data in Snowflake

Data quality enforcement: Great Expectations checks and SHA-256 change detection on every pipeline run

Containerised & production-ready: Docker-based deployments with Airflow orchestration and webhook integrations

dbt modelling with incremental strategies: Dimension tables, fact tables, tested models and proper documentation

Data Engineering

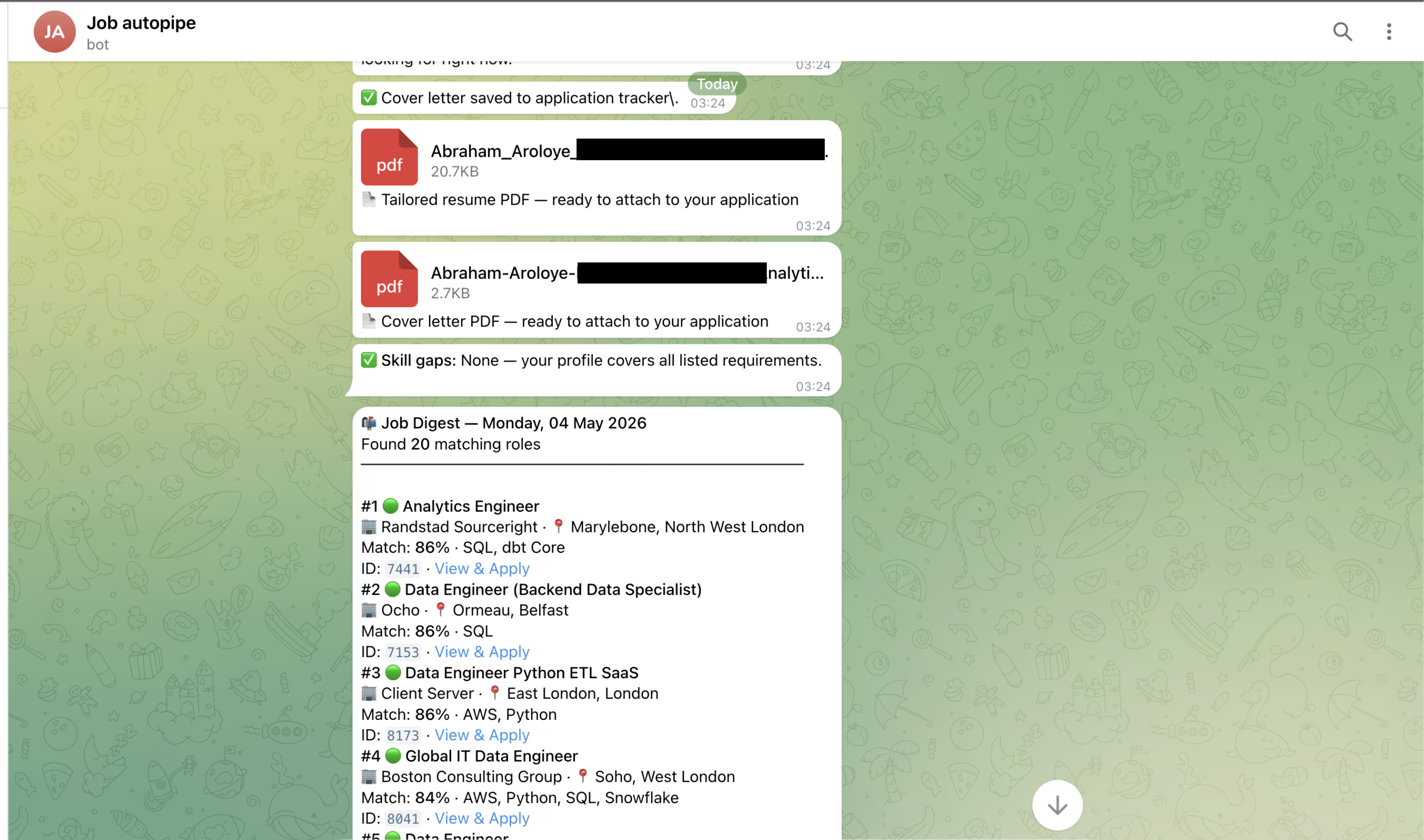

Job Search AutoPipe 🔍➡️📊➡️✉️

Project description

I got tired of applying for jobs manually, so I built a pipeline to do it for me.

Honestly, this started out of frustration. I was spending hours every week copying job descriptions, tweaking my resume, and writing cover letters that all felt the same. As a data engineer, I kept thinking there had to be a better way, so I started building. I called it JobSearch AutoPipe, and it has completely changed how I apply for roles.

Here is what it does. Every day it automatically pulls jobs from the Adzuna and Reed APIs across the whole UK. It runs quality checks on every job using Great Expectations to make sure the data is clean. It classifies the roles based on what I am actually looking for. Then it sends a clean digest straight to my Telegram. No more scrolling through job boards. No more copy-pasting.

When I see a role I like, I just type /flag and the job ID. That is it. Within seconds the system builds me a full application pack. A tailored resume PDF with bullets picked specifically for that job description.
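As a rough illustration of the /flag step, the sketch below parses a chat message of the form `/flag <job_id>`. The function name, the regular expression, and the rule that a job ID is a single whitespace-free token are all assumptions made for this example; it is not the project's actual handler.

```python
import re
from typing import Optional

# Assumed command shape: "/flag", whitespace, then exactly one job ID token.
FLAG_PATTERN = re.compile(r"^/flag\s+(\S+)\s*$")


def parse_flag_command(message: str) -> Optional[str]:
    """Return the job ID from a '/flag <job_id>' message, or None if it does not match."""
    match = FLAG_PATTERN.match(message.strip())
    return match.group(1) if match else None


job_id = parse_flag_command("/flag 12345")   # "12345"
ignored = parse_flag_command("hello there")  # None
```

Returning None for anything that is not a well-formed command lets the caller ignore ordinary chat messages without raising errors.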

Data Engineering

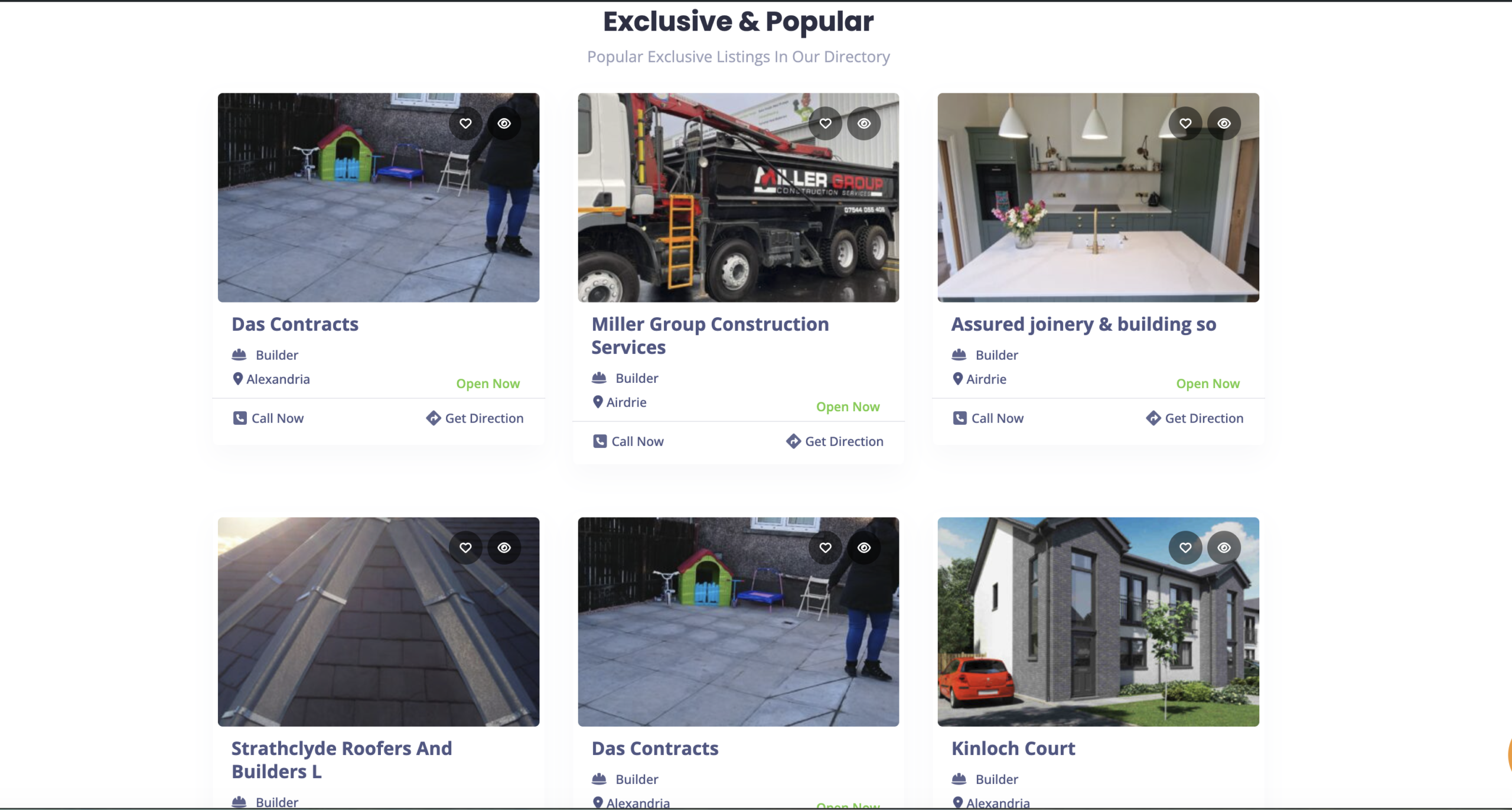

Glasgow Traders AutoPipe

Project description

My data pipeline automates the entire process with a single click of a button.

I changed a few keywords in my script, updated the category, and set a strict rule. Then I hit "run," stepped away from my laptop, and went to make a cup of coffee.

While I was drinking that coffee, my script went to work. It quietly searched through all 107 locations, filtered out the bad data, checked for duplicates, and successfully published almost 1,600 brand new, fully detailed electrician listings to our live website.

Zero duplicates. Zero crashes. Just an hour and a half of the computer doing the heavy lifting in the background.
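One common way to get a duplicate check like this is to fingerprint each listing and skip any fingerprint already seen. The sketch below is only an assumption about how that could look: the `name` and `postcode` fields, the normalisation rules, and the use of SHA-256 here are illustrative, not taken from the actual script.

```python
import hashlib


def listing_fingerprint(listing: dict) -> str:
    """SHA-256 over the normalised name and postcode (hypothetical fields)."""
    # Lowercase and collapse whitespace so trivial variations hash identically.
    key = "|".join(
        " ".join(str(listing.get(field, "")).lower().split())
        for field in ("name", "postcode")
    )
    return hashlib.sha256(key.encode("utf-8")).hexdigest()


def drop_duplicates(listings, seen=None):
    """Return listings whose fingerprint is not already in `seen`, updating `seen`."""
    seen = set() if seen is None else seen
    unique = []
    for listing in listings:
        fingerprint = listing_fingerprint(listing)
        if fingerprint not in seen:
            seen.add(fingerprint)
            unique.append(listing)
    return unique
```

Passing the same `seen` set across runs makes a re-run publish nothing new, which is the idempotent behaviour a "zero duplicates" result depends on.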

Time and Cost Expectations

Time: At roughly 3 to 4 seconds per listing (to download up to 4 photos, hit the API, and upload the metadata), this batch will take about 80 to 100 minutes to complete.
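The estimate can be checked with simple arithmetic. The sketch below assumes a round batch size of 1,600 listings (the text says "almost 1,600") and ignores retries or rate limiting.

```python
def batch_minutes(listings: int, seconds_per_listing: float) -> float:
    """Total run time in minutes for a sequential batch."""
    return listings * seconds_per_listing / 60


low = batch_minutes(1600, 3)   # 80.0 minutes at 3 seconds per listing
high = batch_minutes(1600, 4)  # a little under 107 minutes at 4 seconds per listing
```

At the slower end the figure comes out slightly above 100 minutes, so the 80 to 100 minute range is best read as approximate.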

Data Engineering

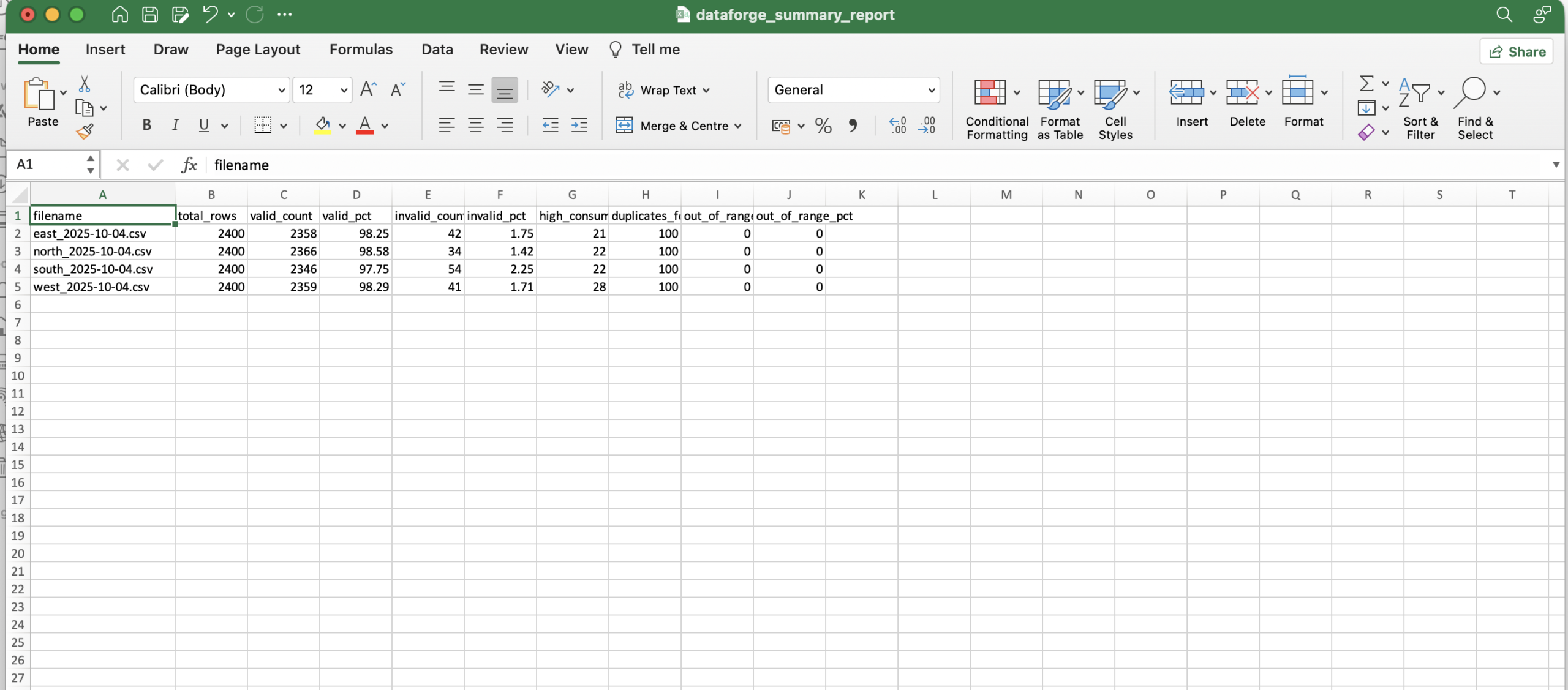

DataForge Energy — Daily Load Analyzer

Project description

A Python data pipeline that ingests, validates, and summarises daily energy meter readings from multiple regional CSV files.

DataForge Energy collects hourly power consumption data from smart meters across four regions. Raw data arrives daily with quality issues including missing values, duplicate meter IDs, negative readings, and out-of-range timestamps. This pipeline automates detection and reporting of these issues.

Raw CSV Files (Bronze)
↓
Data Validation & Cleaning (Silver)
↓
Regional Aggregation & Anomaly Detection (Gold)
↓
JSON + CSV Summary Report (Output)
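The Silver and Gold stages of the flow above can be sketched with the standard library alone. This is a minimal illustration under stated assumptions, not the project's code: the column names (`region`, `meter_id`, `timestamp`, `kwh`), the ISO timestamp format, and the rule that a duplicate means the same meter ID at the same timestamp are all hypothetical.

```python
import csv
import io
from collections import defaultdict
from datetime import date, datetime


def validate_rows(rows, report_date):
    """Silver step: split raw rows into clean readings and (row_number, issue) pairs."""
    clean, issues, seen = [], [], set()
    for n, row in enumerate(rows, start=1):
        region = (row.get("region") or "").strip()
        meter_id = (row.get("meter_id") or "").strip()
        raw_ts = (row.get("timestamp") or "").strip()
        raw_kwh = (row.get("kwh") or "").strip()
        if not meter_id or not raw_ts or not raw_kwh:
            issues.append((n, "missing value"))
            continue
        try:
            ts = datetime.fromisoformat(raw_ts)
            kwh = float(raw_kwh)
        except ValueError:
            issues.append((n, "unparseable value"))
            continue
        if ts.date() != report_date:
            issues.append((n, "out-of-range timestamp"))
        elif kwh < 0:
            issues.append((n, "negative reading"))
        elif (meter_id, ts) in seen:
            # Assumption: a duplicate is the same meter reporting the same hour twice.
            issues.append((n, "duplicate meter ID"))
        else:
            seen.add((meter_id, ts))
            clean.append({"region": region, "meter_id": meter_id, "timestamp": ts, "kwh": kwh})
    return clean, issues


def regional_totals(clean):
    """Gold step: total consumption per region from validated readings."""
    totals = defaultdict(float)
    for reading in clean:
        totals[reading["region"]] += reading["kwh"]
    return dict(totals)


# Tiny in-memory sample standing in for one day's regional CSV files.
SAMPLE = """region,meter_id,timestamp,kwh
north,M1,2024-01-01T00:00:00,1.5
north,M1,2024-01-01T00:00:00,1.5
south,M2,2024-01-01T01:00:00,-2
south,M3,2024-01-02T00:00:00,3
south,M4,2024-01-01T02:00:00,
south,M5,2024-01-01T03:00:00,2.5
"""

rows = list(csv.DictReader(io.StringIO(SAMPLE)))
clean, issues = validate_rows(rows, date(2024, 1, 1))
totals = regional_totals(clean)
```

Keeping rejected rows in an issues list instead of silently dropping them means the summary report can state how many readings failed each check.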